Data Infrastructure for Device and IoT Tracking

For When You Can't Afford an Outage

Device and IoT tracking requires reliable, scalable data infrastructure.

Our approach to data engineering provides high-performing, fault-tolerant, fully managed streaming, storage, and search for technology companies.

Improve Performance, Decrease Costs

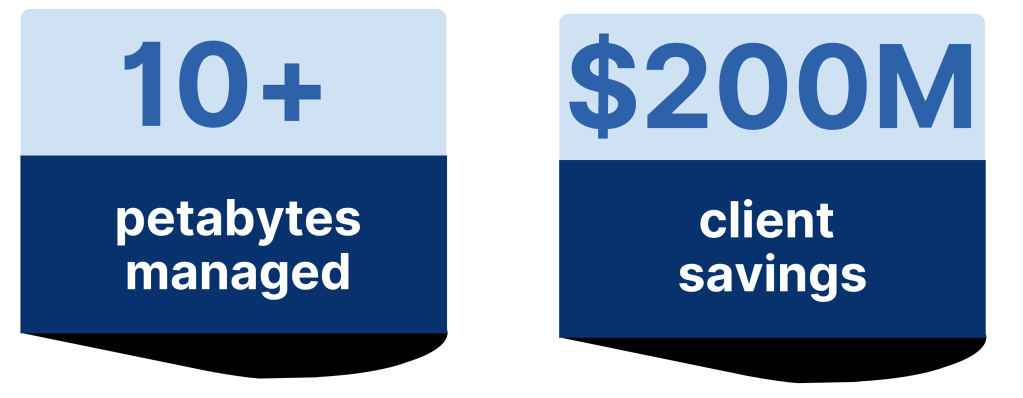

We’ve saved our clients over $200M on their data infrastructure.

Software Costs.

Moving to free Elasticsearch and migrating to OpenSearch are both trusted alternatives to paid software, and neither carries a license fee. This alone saves many clients $1M or more per year.

Hardware Costs.

Poorly written queries inflate hardware costs. A single bad query can use 10,000% more hardware than an optimized equivalent.
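As an illustration of the kind of query that wastes hardware, the sketch below contrasts two Elasticsearch query bodies written as Python dictionaries. The Elasticsearch documentation warns that wildcard patterns beginning with `*` or `?` can slow search performance, while exact `term` matches inside a `bool` filter skip relevance scoring and can be cached. The field names `device_id` and `status`, their values, and the `has_leading_wildcard` helper are hypothetical; actual savings depend on the data and the cluster.

```python
# Hypothetical field names ("device_id", "status") for illustration only.
# A leading wildcard forces Elasticsearch to examine many terms in the index.
slow_query = {
    "query": {
        "wildcard": {
            "device_id": {"value": "*-4711"}
        }
    }
}

# Exact matches in filter context use the inverted index directly and,
# because filters do not compute relevance scores, can be cached.
fast_query = {
    "query": {
        "bool": {
            "filter": [
                {"term": {"device_id": "truck-4711"}},
                {"term": {"status": "active"}},
            ]
        }
    }
}


def has_leading_wildcard(query: dict) -> bool:
    """Recursively look for wildcard patterns that begin with * or ?."""
    for key, value in query.items():
        if key == "wildcard" and isinstance(value, dict):
            for spec in value.values():
                # Accept both {"field": {"value": "..."}} and {"field": "..."}.
                pattern = spec["value"] if isinstance(spec, dict) else spec
                if isinstance(pattern, str) and pattern.startswith(("*", "?")):
                    return True
        if isinstance(value, dict) and has_leading_wildcard(value):
            return True
        if isinstance(value, list) and any(
            isinstance(item, dict) and has_leading_wildcard(item) for item in value
        ):
            return True
    return False
```

A check like `has_leading_wildcard` could run in code review or in a query gateway to flag expensive patterns before they reach production.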

Business Losses.

Technology companies need their data infrastructure online and collecting 100% of incoming data at all times to avoid costly business losses. Expertise is needed to ensure a reliable data infrastructure.

Components of IoT Data Management

IoT monitoring requires that all data be received, cleaned, and quickly retrievable. To meet these needs, a company's data infrastructure should include the following:

- 24x7 monitoring & on-call

- Fault tolerant system to handle spikes in data

- Defined data delivery semantics, for instance exactly-once or at-least-once delivery

- Custom optimization of your data infrastructure

- Cross-cluster replication to prevent data loss and decrease latency
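The difference between the two delivery semantics in the list can be sketched with a small simulation. At-least-once delivery never drops a message but may deliver it more than once, for example after a producer retry; exactly-once results require that duplicates have no effect. Kafka supports this with idempotent producers and transactions; the sketch instead shows the application-level idea of a consumer that deduplicates by message ID. The `Message` and `DedupingConsumer` types and the ID scheme are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Message:
    message_id: str  # hypothetical unique ID assigned by the device or producer
    payload: float


@dataclass
class DedupingConsumer:
    """Consumer that tolerates redelivery by remembering processed message IDs."""
    seen_ids: set = field(default_factory=set)
    total: float = 0.0

    def handle(self, msg: Message) -> bool:
        # At-least-once delivery may hand us the same message twice.
        # Skip anything already applied so the result stays correct.
        if msg.message_id in self.seen_ids:
            return False
        self.seen_ids.add(msg.message_id)
        self.total += msg.payload
        return True


# Simulated at-least-once stream: "m2" is delivered twice after a retry.
stream = [Message("m1", 1.5), Message("m2", 2.0), Message("m2", 2.0), Message("m3", 0.5)]

naive_total = sum(m.payload for m in stream)  # counts the duplicate
consumer = DedupingConsumer()
applied = [consumer.handle(m) for m in stream]
```

With the redelivered `m2`, the naive sum counts 6.0 while the deduplicating consumer records 4.0. Remembering every ID forever does not scale, so a real system would need to bound this state, for example with per-device sequence numbers.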

Device Monitoring Data Engineering Needs

Real-Time Data Streaming

Data collection and ingestion from IoT devices requires communicating with hundreds, thousands, and sometimes even millions of individual data sources.

A well-designed ingestion system can handle diverse data formats, manage high volumes, and allow data engineers to implement pre-processing and filtering techniques to remove irrelevant data early in the pipeline.
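A minimal sketch of early pre-processing, assuming devices send either JSON or a simple three-field CSV line. Both formats, the field names, and the allowed metric list are hypothetical. Malformed or irrelevant readings are dropped before they reach the streaming platform, and every surviving record leaves in one normalized shape.

```python
import json
from typing import Optional

# Hypothetical set of metrics that downstream consumers actually need.
ALLOWED_METRICS = {"speed_kph", "engine_temp_c"}


def normalize(raw: str) -> Optional[dict]:
    """Parse one raw reading from JSON or CSV; return None to drop it."""
    raw = raw.strip()
    if not raw:
        return None
    if raw.startswith("{"):
        try:
            data = json.loads(raw)
        except json.JSONDecodeError:
            return None  # malformed JSON: drop rather than poison downstream
        device_id = data.get("device_id")
        metric = data.get("metric")
        value = data.get("value")
    else:
        parts = [p.strip() for p in raw.split(",")]
        if len(parts) != 3:
            return None
        device_id, metric, value = parts
    # Filter irrelevant data as early in the pipeline as possible.
    if not device_id or metric not in ALLOWED_METRICS:
        return None
    try:
        value = float(value)
    except (TypeError, ValueError):
        return None
    return {"device_id": device_id, "metric": metric, "value": value}
```

For example, `normalize("truck-2,engine_temp_c,91.5")` keeps the reading, while a `debug_counter` line or a truncated JSON payload is discarded.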

For data collection, we use either Apache Kafka or Apache Pulsar, depending on a client's needs. Both platforms are widely used, extensively tested, free, and open source, so our clients pay no license fees to use them.

Additionally, we guarantee 99.99% uptime for data streaming in production, and our clients trust us to ensure that 100% of their data is collected.
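To put the 99.99% figure in perspective, an availability target can be converted into a downtime budget. This is plain arithmetic rather than anything specific to Kafka or Pulsar, and the function name is our own.

```python
MINUTES_PER_DAY = 24 * 60


def downtime_budget_minutes(availability: float, days: float) -> float:
    """Maximum allowed downtime, in minutes, for an availability target over a period."""
    if not 0.0 <= availability <= 1.0:
        raise ValueError("availability must be a fraction between 0 and 1")
    return (1.0 - availability) * days * MINUTES_PER_DAY


yearly = downtime_budget_minutes(0.9999, 365)
monthly = downtime_budget_minutes(0.9999, 30)
print(f"{yearly:.1f} min/year, {monthly:.1f} min per 30 days")
```

At 99.99%, the budget is roughly 52.6 minutes per year, or about 4.3 minutes per 30-day month.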

Data Storage and Search

Data storage is where raw and processed data is kept for further use. We use either Elasticsearch or OpenSearch, depending on each client’s individual needs and preferences. Both Elasticsearch and OpenSearch are horizontally scalable to handle large workloads and spikes in data. For instance, some of our clients are ingesting terabytes of data each day.
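Horizontal scaling in Elasticsearch and OpenSearch works by spreading an index's shards across nodes, so shard count is one of the first sizing decisions. The sketch below estimates primary shards for a daily index, assuming a target of about 40 GB per shard (Elastic's sizing guidance commonly cites 10 GB to 50 GB per shard) and treating ingest volume as on-disk size, which ignores compression, replicas, and mapping overhead. The function and the 2 TB/day example are hypothetical.

```python
import math


def primary_shards_for_daily_index(daily_ingest_gb: float, target_shard_gb: float = 40.0) -> int:
    """Primary shards needed so each shard of a daily index stays near the target size."""
    if daily_ingest_gb <= 0 or target_shard_gb <= 0:
        raise ValueError("sizes must be positive")
    # Round up so no shard exceeds the target; always at least one shard.
    return max(1, math.ceil(daily_ingest_gb / target_shard_gb))


# Hypothetical client ingesting 2 TB (2,000 GB) per day.
shards = primary_shards_for_daily_index(2000)
```

At a hypothetical 2 TB per day, this yields 50 primary shards; each replica level then adds another copy of every primary shard across the cluster.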

We also employ hot, warm, and cold storage classifications to suit each use case.
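The hot, warm, and cold classification can be sketched as a simple age-based rule. In practice, Elasticsearch automates these transitions with index lifecycle management (ILM) and OpenSearch with Index State Management (ISM); the thresholds and the function here are hypothetical and only illustrate the decision.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical age thresholds; real values depend on query patterns and retention rules.
HOT_MAX_AGE = timedelta(days=7)
WARM_MAX_AGE = timedelta(days=30)


def storage_tier(index_created: datetime, now: Optional[datetime] = None) -> str:
    """Classify an index as hot, warm, or cold by its age."""
    now = now or datetime.now(timezone.utc)
    age = now - index_created
    if age < HOT_MAX_AGE:
        return "hot"   # recent data: fast disks, frequent writes and queries
    if age < WARM_MAX_AGE:
        return "warm"  # read-mostly data on cheaper nodes
    return "cold"      # rarely queried data on the lowest-cost storage
```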

Both platforms are also search engines, so for our clients we emphasize smart categorization of data and fast retrieval.

Real-Time Monitoring and Alerting

Continuous monitoring of data infrastructure is critical for identifying emerging issues. Early detection allows these issues to be resolved before they degrade performance.
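One way to catch an issue while it is still emerging is to alert on a trend instead of waiting for a hard limit. The sketch below watches Kafka consumer lag (how far a consumer group trails the newest messages) and warns when lag rises across several consecutive samples. The class name, sample count, and thresholds are hypothetical; in production, checks like this typically run in a monitoring system rather than in application code.

```python
from collections import deque


class LagTrendAlert:
    """Classify consumer-lag samples as ok, warning, or critical.

    "warning" fires when lag has risen on each of the last `rising_samples`
    observations; "critical" fires once lag reaches `hard_limit`.
    """

    def __init__(self, rising_samples: int = 3, hard_limit: int = 100_000):
        self.hard_limit = hard_limit
        # Keep one extra sample so we can compare `rising_samples` consecutive pairs.
        self.history = deque(maxlen=rising_samples + 1)

    def observe(self, lag: int) -> str:
        self.history.append(lag)
        if lag >= self.hard_limit:
            return "critical"
        samples = list(self.history)
        if len(samples) == self.history.maxlen and all(
            later > earlier for earlier, later in zip(samples, samples[1:])
        ):
            return "warning"
        return "ok"


alert = LagTrendAlert(rising_samples=3, hard_limit=1000)
states = [alert.observe(lag) for lag in [100, 120, 110, 150, 200, 260, 1200]]
```

For this series, the alert stays `ok` until lag has risen three times in a row at 260, then reports `warning`, and reports `critical` at 1,200.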

Security and Privacy Management

IoT data can contain sensitive information. This information requires strict security measures. Our engineers implement data encryption, role-based access controls, and secure data storage solutions to protect against unauthorized access.
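Role-based access control comes down to a deny-by-default check of a user's roles against the actions they are allowed to perform. Elasticsearch and OpenSearch both ship security features with their own role definitions, which is where this belongs in practice; the sketch only illustrates the logic, and the roles, index patterns, and `is_allowed` helper are hypothetical.

```python
import fnmatch
from enum import Enum
from typing import Iterable


class Action(Enum):
    READ = "read"
    WRITE = "write"
    ADMIN = "admin"


# Hypothetical roles mapped to (index pattern, allowed action) pairs.
ROLE_PERMISSIONS = {
    "analyst": {("telemetry-*", Action.READ)},
    "ingest_service": {("telemetry-*", Action.WRITE)},
    "platform_admin": {("*", Action.READ), ("*", Action.WRITE), ("*", Action.ADMIN)},
}


def is_allowed(roles: Iterable[str], index: str, action: Action) -> bool:
    """Return True only if some role explicitly grants the action on the index."""
    for role in roles:
        for pattern, granted in ROLE_PERMISSIONS.get(role, set()):
            if granted is action and fnmatch.fnmatchcase(index, pattern):
                return True
    return False  # deny by default: unknown roles and unmatched indices get nothing
```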

Expert Management of Data Architecture

Data engineering workflows require expertise to be set up and managed correctly. Misconfigurations, inadequate scaling, and inefficient workflows can lead to costly errors and downtime.

For many businesses, working with an external expert company for data infrastructure management is the best choice. Here’s why:

Cost Savings.

Poorly architected data pipelines can lead to inefficiencies and unexpected costs. We optimize your data pipeline to improve performance and reduce infrastructure costs. For instance, a single bad query can result in 10,000% more hardware usage.

Time Efficiency.

An experienced team can set up and manage complex data pipelines faster than an internal team learning on the job. This frees up time for businesses to focus on their core activities.

Scalability and Reliability.

Scaling data pipelines to handle increasing data loads requires deep expertise. We ensure our clients’ systems are scalable, reliable, and capable of handling growth as data needs expand. See our SLA overview below.

Security and Compliance.

We are well-versed in best practices and compliance standards across industries. This ensures that your data engineering pipelines meet regulatory requirements.

Case Study: Device and IoT Tracking, 900 TB per day

Challenge

A technology company needed to monitor the driving performance of hundreds of thousands of vehicles. As close to 100% of the data as possible needed to be retained to develop machine learning models that predict profitability.

The client needed real-time alerting and dashboards. The system needed to be optimized for low latency and durability.
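For a sense of scale, 900 TB per day is a large sustained rate. The arithmetic below uses decimal units (1 TB = 1,000 GB) and averages over the full day, so real peaks are higher. The device count of 300,000 is a hypothetical stand-in for "hundreds of thousands of vehicles," and the function names are our own.

```python
SECONDS_PER_DAY = 24 * 60 * 60


def average_throughput_gb_per_s(tb_per_day: float) -> float:
    """Average ingest rate in GB/s for a daily volume in TB (decimal units)."""
    return tb_per_day * 1000 / SECONDS_PER_DAY


def per_device_gb_per_day(tb_per_day: float, devices: int) -> float:
    """Average daily volume per device in GB."""
    return tb_per_day * 1000 / devices


rate = average_throughput_gb_per_s(900)           # whole fleet
per_vehicle = per_device_gb_per_day(900, 300_000)  # hypothetical fleet size
```

The average works out to roughly 10.4 GB/s, and at the assumed device count, about 3 GB per vehicle per day.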

Solution

We worked across teams to deploy log collection and producer programs on all devices.

We created custom pipelines for devices, such as firewalls, that do not support producing directly to the streaming platform. We also secured the entire pipeline, with data protected both in transit and at rest.
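Devices that cannot produce to the streaming platform directly can send their logs to a small relay that parses and batches them for a producer. The sketch below assumes a simplified, hypothetical firewall log format and takes the send function as a parameter, so any real producer client could be wrapped behind it. Keying records by host keeps each firewall's events in order, since Kafka's default partitioner sends messages with the same key to the same partition. `FirewallRelay` and `LINE_RE` are hypothetical names.

```python
import re
from typing import Callable, List, Tuple

# Hypothetical simplified format: "<timestamp> <host> <ALLOW|DENY> <src> -> <dst>"
LINE_RE = re.compile(
    r"^(?P<ts>\S+) (?P<host>\S+) (?P<action>ALLOW|DENY) (?P<src>\S+) -> (?P<dst>\S+)$"
)

Batch = List[Tuple[str, dict]]  # (message key, record) pairs


class FirewallRelay:
    """Buffer parsed firewall log lines and hand them to a producer in batches."""

    def __init__(self, send_batch: Callable[[Batch], None], batch_size: int = 500):
        self.send_batch = send_batch  # e.g. a wrapper around a real producer client
        self.batch_size = batch_size
        self.buffer: Batch = []

    def accept(self, line: str) -> None:
        match = LINE_RE.match(line.strip())
        if match is None:
            return  # unparseable; a production relay might route these elsewhere
        record = match.groupdict()
        # Key by host so each firewall's events land in the same partition.
        self.buffer.append((record["host"], record))
        if len(self.buffer) >= self.batch_size:
            self.flush()

    def flush(self) -> None:
        if self.buffer:
            self.send_batch(self.buffer)
            self.buffer = []
```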

We used Apache Kafka for streaming and Elasticsearch for monitoring. Real-time monitoring and alerting were built to track performance and detect emerging issues.

Current Situation

Our engineers continue to manage the architecture, including preventative maintenance, upgrades, scaling, and 24×7 uptime support.

The company hasn’t had a Kafka outage in the 5 years that Dattell has managed their data infrastructure. And we’ve seamlessly supported their 10-fold growth over the same time period.

24x7 Data Engineering Support & Consulting

Visit our OpenSearch page for more details on our support services.